The universal translator, large language models, and AI-enhanced writing.

Welcome to Gradient Descent, a weekly publication on what’s new in the world of A.I. and Machine Learning, no PhD required, brought to you by Astek Alpha.

Like the newsletter? Join our WhatsApp community through this link.

Meta Announces Progress Towards Building Universal Translator

The Story:

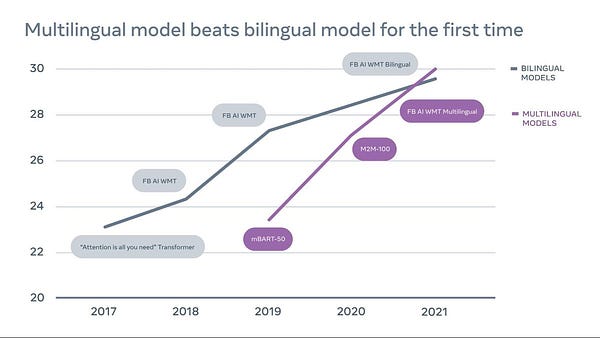

The A.I. research team at Meta (the company formerly known as Facebook) has announced that it won the prestigious WMT machine translation contest. This is a first for a single multilingual machine translation model, which outperformed specially trained bilingual models.

Not mincing his words, Meta’s CTO Mike Schroepfer touts this victory as a step toward building a universal translator.

The Meta team elaborates:

“To build a universal translator, we believe the MT (Machine Translation, red.) field should shift away from bilingual models and advance toward multilingual translation — where a single model translates many language pairs at once, including both low-resource (e.g., Icelandic to English) and high-resource (e.g., English to German). Multilingual translation is an appealing approach — it’s simpler, more scalable, and better for low-resource languages.

But until now, this approach couldn’t provide results for high-resource language pairs that were as good as specially trained bilingual models for those language pairs. As a result, delivering quality translations across many languages has generally involved using a combination of individual bilingual models, and low-resource languages have lagged behind.”

Read the full story on the Meta Blog or check out the full submission paper.

Why this matters:

Ideas of building the universal translator aside - which admittedly I would prefer over sticking a Babel fish into my ear - this is a great step forward for machine translation.

On the internet, the amount of monolingual data for any language vastly exceeds the amount of parallel data (text paired with a translation in another language), so it’s essential to build MT systems that can learn from monolingual data.

The team’s approach of creating “one model for many languages” will drastically simplify the development of translation systems in real-world applications, and enable new products and services which wouldn’t have been possible without it.

I’ll leave you with a tongue-in-cheek quote from Douglas Adams:

“Meanwhile, the poor Babel fish, by effectively removing all barriers to communication between different races and cultures, has caused more and bloodier wars than anything else in the history of creation.”

Is A.I. Becoming Too Expensive?

The Story:

Over the last few years, we have seen impressive breakthroughs in AI, notably in the field of Natural Language Processing (NLP). NLP has produced impressive tools, such as automatic code completion and automatic text generation (no worries, developers are still in high demand).

These breakthroughs have been possible because of the emergence of extremely large language models such as GPT-3, which have been trained on truly massive datasets. These models are in fact so large, and take such expertise to develop and deploy, that they are very expensive to run. That is one of the reasons why OpenAI decided to make GPT-3 available through its commercial API, so anyone can use it for a reasonable fee… or can they?

It turns out that service providers exposing these language models charge fees that are prohibitively expensive for most companies, meaning only very large or very profitable companies can use them to improve their products.

To make matters worse, researchers are already working on the next generation of models, which will be significantly larger and even more expensive to run (GPT-4 is rumored to be as much as 500 times the size of GPT-3).

All of this raises the question: is A.I. becoming too expensive? My answer: perhaps, but only at the very cutting edge of AI.

The good news is that in 90% of cases, you don’t need bleeding-edge technology.

Many open-source resources exist, and will continue to be developed, that are more than capable of solving the business problems most companies face.

It’s just a matter of making the right trade-off in terms of accuracy, speed, and cost.

Why this matters:

You may wonder why everyone is desperately trying to train ever larger and more performant language models.

Is there more going on here than just product innovation? There is.

The scramble for size and performance appears to be driven by a belief in the scaling hypothesis, and the hope of running into Artificial General Intelligence (AGI) by simply throwing massive amounts of money at the problem.

It is no exaggeration to say that there is an arms race going on: China and the USA are investing massively in order to be the first to crack the puzzle of AGI, and very large language models seem to be the horse everyone is betting on right now.

Of course, not everyone thinks this is a good idea.

To quote Stuart Russell, a computer science professor at Berkeley and AI pioneer:

“focusing on raw computing power misses the point entirely […] We don’t know how to make a machine really intelligent — even if it were the size of the universe.”

Time will tell who is right, but in the meantime, the economic and environmental impact of these efforts cannot be overstated.

And that, perhaps, should give us pause.

Grammarly raises $200M to make you an even better writer using AI

The Story:

Grammarly, which makes artificially intelligent software that helps improve people’s writing, has raised $200 million in funding. New investors include Baillie Gifford and funds and accounts managed by BlackRock. The company plans to use the investment to accelerate product innovation and team growth.

Grammarly works across more than 500,000 applications and websites. The company says as more people are connecting across more online platforms, it’s important to get communication right. Baillie Gifford says Grammarly is one of the few businesses in the world focused on solving this problem.

Why this matters:

You might think, “Great, another AI-powered spellchecker.” But Grammarly is much more than that: it uses machine learning to assist not only with spelling and grammar, but also with tone and context. In other words, it improves the quality of our written communication.

Hitting the right tone in an email, avoiding grammar mistakes in your professional communications: all of these things are vital to being taken seriously and unlocking professional opportunities.

For non-native English speakers, and even many native speakers from more diverse backgrounds, Grammarly really levels the playing field, and allows them to compete more effectively in business and in entrepreneurship!

Since I’ve been focused on language this week, Evaluating Large Language Models Trained on Code by Chen et al. seems like a nice pick for this week’s Awesome Paper.

This is the work behind Codex, the model created by OpenAI that powers GitHub Copilot and synthesizes code to match a user-provided contextual input.

Although it’s a very accessible paper, and I recommend reading it, you can also check out the team’s very engaging video explanation below 😍

Streamlit: A Fast Way to Build and Share Data Apps

I’ve recently been playing around with Streamlit to publish some of my models on Hugging Face Spaces, and really love it!

To quote the team’s original blog post from 2019:

“We wanted machine learning engineers to be able to create beautiful apps without needing a tools team. These internal tools should arise as a natural byproduct of the ML workflow. Writing such tools should feel like training a neural net or performing an ad-hoc analysis in Jupyter! At the same time, we wanted to preserve all of the flexibility of a powerful app framework. We wanted to create beautiful, performant tools that engineers could show off.”

I think Streamlit definitely lives up to that promise: plotting, and even creating user interfaces, is made incredibly simple, in most cases as simple as printing to the standard output!

One of the things I like the most, though, is that Streamlit provides a super simple caching mechanism that allows your app to stay performant even when loading data from the web, manipulating large datasets, or performing expensive computations.

Adding caching is as simple as adding the @st.cache decorator to a function.
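To make that concrete, here is a minimal sketch of what a cached function might look like. The function `slow_square` is a made-up stand-in for an expensive computation, not something from the Streamlit docs. Note that newer Streamlit releases replace `st.cache` with `st.cache_data` and `st.cache_resource`, so the sketch picks whichever decorator is available. The fallback to Python's built-in `functools.lru_cache` is only there so the snippet still runs on a machine without Streamlit installed.

```python
import time
from functools import lru_cache

try:
    import streamlit as st
    # Older releases expose st.cache (as described above);
    # newer releases provide st.cache_data instead.
    cache = getattr(st, "cache_data", None) or st.cache
except ImportError:
    # Assumption: fallback so the sketch runs without Streamlit installed.
    st = None
    cache = lru_cache(maxsize=None)


@cache
def slow_square(n: int) -> int:
    """Hypothetical stand-in for a slow download or heavy computation."""
    time.sleep(0.5)  # simulate the expensive part
    return n * n


# First call does the real work and stores the result.
start = time.perf_counter()
first = slow_square(12)
first_duration = time.perf_counter() - start

# Second call with the same argument is served from the cache.
start = time.perf_counter()
second = slow_square(12)
second_duration = time.perf_counter() - start

print(f"first call:  {first} in {first_duration:.3f}s")
print(f"second call: {second} in {second_duration:.3f}s")
```

In a real app you would typically show the result with something like `st.write` and start the script with `streamlit run`; the `print` calls here just keep the sketch easy to try from a plain terminal.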

I guess what I’m trying to say is that I love Streamlit and I think you might too!

This newsletter is made possible by Astek Alpha, a next-generation data science and machine learning consultancy.

Looking to transform your data into value?

Wondering what machine learning could do for your company?

Trying to scale your A.I. solutions?